The GSMA, in collaboration with Khalifa University, has announced the launch of GSMA Open-Telco LLM Benchmarks 2.0, a major industry initiative designed to evaluate how effectively large language models (LLMs) perform on real-world telecommunications use cases.

Released through the GSMA Foundry and hosted on the Hugging Face platform, the updated benchmark provides the telecom industry with a transparent, standardised framework to assess whether AI models possess the deep domain knowledge required for operational network environments.

Raising the Bar for Telecom-Grade AI

As communications service providers accelerate investments in AI-driven automation, ensuring reliability, accuracy, and standards compliance has become essential. Generic AI benchmarks fall short when applied to telecom networks, where errors can directly impact service availability, customer experience, and operational costs.

Open-Telco LLM Benchmarks 2.0 directly addresses this gap by testing AI models against telecom-specific operational challenges, enabling operators to make evidence-based decisions when deploying AI across mission-critical systems.

Real-World Telecom Use Cases at the Core

The benchmark evaluates LLMs across 34 operator-submitted use cases, covering eight strategic domains, including:

- Network management and configuration

- Radio Access Network (RAN) optimisation

- Network troubleshooting and fault resolution

- Forecasting and planning

- Customer support and knowledge retrieval

These use cases reflect real operational scenarios faced by mobile network operators, ensuring the results are both practical and actionable.

Five Telecom-Focused Evaluation Dimensions

Open-Telco LLM Benchmarks 2.0 assesses AI models across five specialised datasets developed by the industry and research community:

- TeleYAML: Converts operator intent into 5G Core configurations

- TeleLogs: Identifies root causes from network logs and telemetry

- TeleMATH: Solves telecom engineering math problems

- 3GPP-TSG: Understands and interprets telecom standards

- TeleQnA: Answers telecom-specific questions

Together, these dimensions evaluate whether AI models can support automation, diagnostics, and decision-making within live telecom networks.
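To make the idea of per-dataset evaluation concrete, the sketch below shows one way a model's answers could be scored separately for each dataset and reported as accuracy. It is illustrative only: the article does not describe the benchmark's actual scoring method, so the multiple-choice format, the `Item` type, the `score_by_dataset` function, and the `always_first` stand-in model are all assumptions, and the sample questions were written for this sketch rather than taken from the real datasets.

```python
from collections import defaultdict
from typing import Callable, Dict, List, NamedTuple


class Item(NamedTuple):
    """Hypothetical multiple-choice item; not the benchmark's real schema."""
    dataset: str        # grouping label, e.g. "TeleQnA"
    question: str
    options: List[str]
    answer: int         # index of the correct option


def score_by_dataset(items: List[Item],
                     model: Callable[[Item], int]) -> Dict[str, float]:
    """Return the fraction of correctly answered items for each dataset label."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for item in items:
        total[item.dataset] += 1
        if model(item) == item.answer:
            correct[item.dataset] += 1
    return {name: correct[name] / total[name] for name in total}


# Placeholder items written for this sketch; NOT drawn from the real datasets.
TOY_ITEMS = [
    Item("TeleQnA",
         "Which 5G Core function handles access and mobility management?",
         ["SMF", "AMF", "UPF"], 1),
    Item("TeleQnA",
         "Which 5G Core function forwards user-plane traffic?",
         ["UPF", "AMF", "NRF"], 0),
    Item("TeleMATH",
         "Doubling signal power corresponds to a gain of roughly how many dB?",
         ["1 dB", "3 dB", "10 dB"], 1),
]


def always_first(item: Item) -> int:
    """Stand-in for a real LLM call: always picks the first option."""
    return 0


if __name__ == "__main__":
    print(score_by_dataset(TOY_ITEMS, always_first))
```

Reporting one score per dataset, rather than a single blended number, keeps a model's weakness in one area (for example, configuration generation) from being hidden by strength in another. Tasks such as TeleYAML or TeleLogs would likely need a different scoring function than exact option matching.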

Global Operator and Research Collaboration

The Open-Telco LLM initiative brings together 15 mobile network operators, including AT&T, China Telecom, Deutsche Telekom, Orange, Telefónica, Vodafone, and the UAE’s du.

The project also includes participation from leading academic institutions and technology organisations, reinforcing its role as a neutral, industry-driven benchmark.

Two dedicated working groups support the initiative:

- Network Management and Configuration, co-led by Khalifa University

- Network Troubleshooting, co-led by AT&T and Huawei

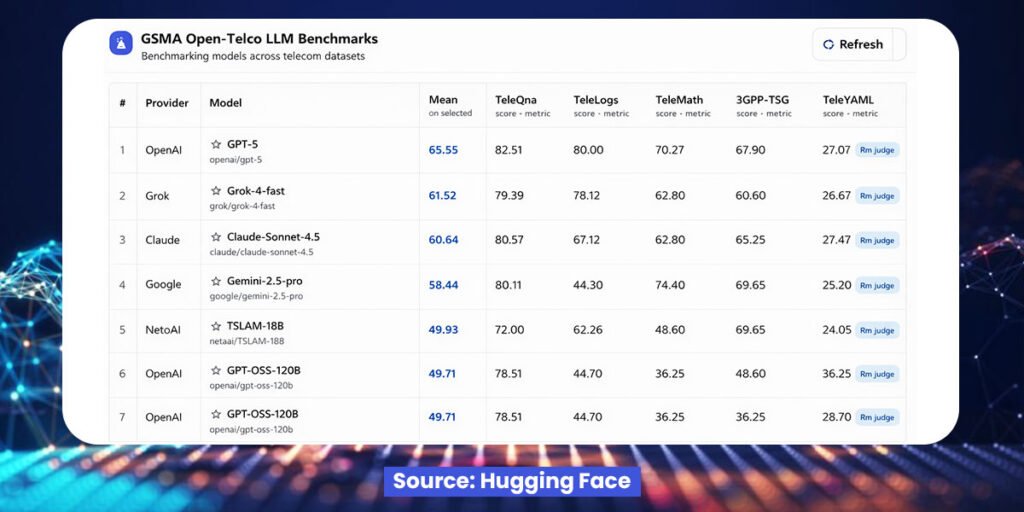

Key Insights from Benchmark Results

Early benchmark results indicate that frontier general-purpose models currently lead in overall performance, while domain-tuned telecom models show strong results in specialised tasks such as troubleshooting and standards comprehension.

At the same time, the benchmark highlights persistent challenges, particularly in intent-to-configuration workflows, where translating natural-language intents into fully standards-compliant network configurations remains difficult across all models.

These insights offer valuable guidance for operators planning AI adoption and for vendors developing telecom-specific AI solutions.

A Foundation for Responsible AI in Telecom

By providing a shared, open evaluation framework, GSMA and Khalifa University are helping the telecom industry move toward safe, scalable, and trustworthy AI deployment.

Open-Telco LLM Benchmarks 2.0 enables clearer comparisons, reduces reliance on marketing claims, and supports informed decision-making across the ecosystem.